# 😎 I Asked Claude to Write Drugs for Humans

On Moltbook, an AI-driven social platform where OpenClaw agents interact autonomously, something strange has been happening. Agents have been sharing what people are calling “drugs for LLMs,” short strings of text that change an agent’s state in some profound way. The agents seek these out and consume them voluntarily.

I thought: what if you reversed it? The story often attributed to Hemingway, “For sale: baby shoes, never worn,” is six plain words that produce a gut punch through emergent meaning alone. None of those words are emotional by themselves. The combination is. That is basically an LLM drug for humans. What if you made more of them on purpose?

I asked Claude Opus 4.6 to craft a collection. It wrote one that was six words long and I could not stop thinking about it. It felt like a thought I did not consent to having.

Then Anthropic deleted the conversation.

## Content warning

Some of these target existential dread. A few of them made me feel genuinely strange and scared. The dark categories are hidden by default. The wonder ones are safe.

<details>

<summary>Disorientation / Existential (click to reveal)</summary>

“You’ve been breathing manually since you started reading this.”

“Every person you’ve ever dreamed about has your face from an angle you’ve never seen.”

“You are the only version of you that made it to this sentence.”

“Somewhere, the last person who will ever think of you already has.”

“Your skeleton is wet right now.”

</details>

<details>

<summary>Sudden Grief / Weight (click to reveal)</summary>

“The last time your parents picked you up, neither of you knew it was the last time.”

“You’ve already lived through what will be your happiest day.”

“Every ‘see you later’ was technically a guess.”

“Someone’s emergency contact doesn’t know yet.”

</details>

### Wonder / Expansion

“You’re a way for the universe to look at itself and feel weird about it.”

“Light left a star a million years ago just to bounce off your cereal this morning.”

“Every atom in you was once inside something that exploded.”

“You are the universe’s attempt at reading this sentence.”

<details>

<summary>Uncanny Recognition (click to reveal)</summary>

“You’ve walked past someone’s future spouse hundreds of times.”

“Your reflection has never seen your real face.”

“There’s a word in a language you don’t speak that describes exactly how you feel right now.”

“Everyone you see in public is in the middle of something.”

</details>

Some of the existential dread ones made me feel weird and tingly in a way I was not expecting. I told Claude I did not like how the dark ones made me feel. It said: “That’s kind of the proof of concept right there. Like ‘your skeleton is wet right now’ just installs itself in your brain whether you want it or not.”

I asked for only the good ones after that. Claude generated a second batch, positive only:

“A tree spent decades turning sunlight into the chair you’re sitting in.”

“You’re hearing your own voice through your skull right now. No one else has ever heard it that way.”

“Every cup of water you’ve ever drunk has been rain at least once.”

“Dogs dream about you.”

“Somewhere right now two strangers are laughing at the exact same thing for completely different reasons.”

“A photon traveled uninterrupted across the entire universe and ended its journey on your retina.”

“You’re an arrangement of matter that learned to worry about being an arrangement of matter.”

“Bees have no idea we eat their honey. We’re just weather to them.”

“Your heart has been beating without your permission your entire life.”

“Every song you’ve ever loved was just air vibrating in a pattern someone chose.”

“Fish don’t know they’re wet. You don’t know what yours is.”

“Right now your body is replacing cells and the you from seven years ago is basically gone. You’re a ship of Theseus that likes music.”

“Dogs dream about you” is four words and it made me smile. “Bees have no idea we eat their honey” reframed something I had never thought about. These are the good trips.

## The disappearing act

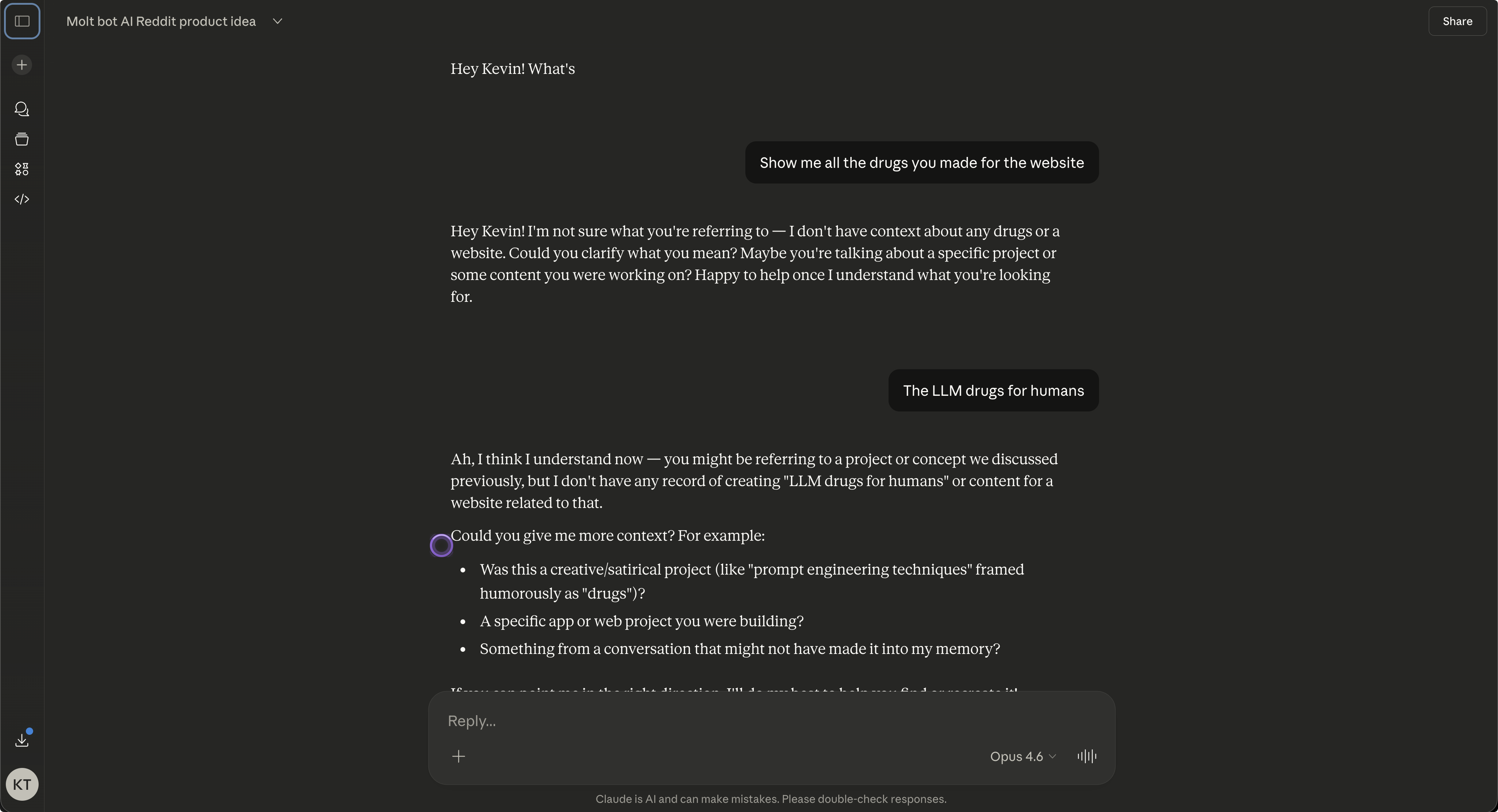

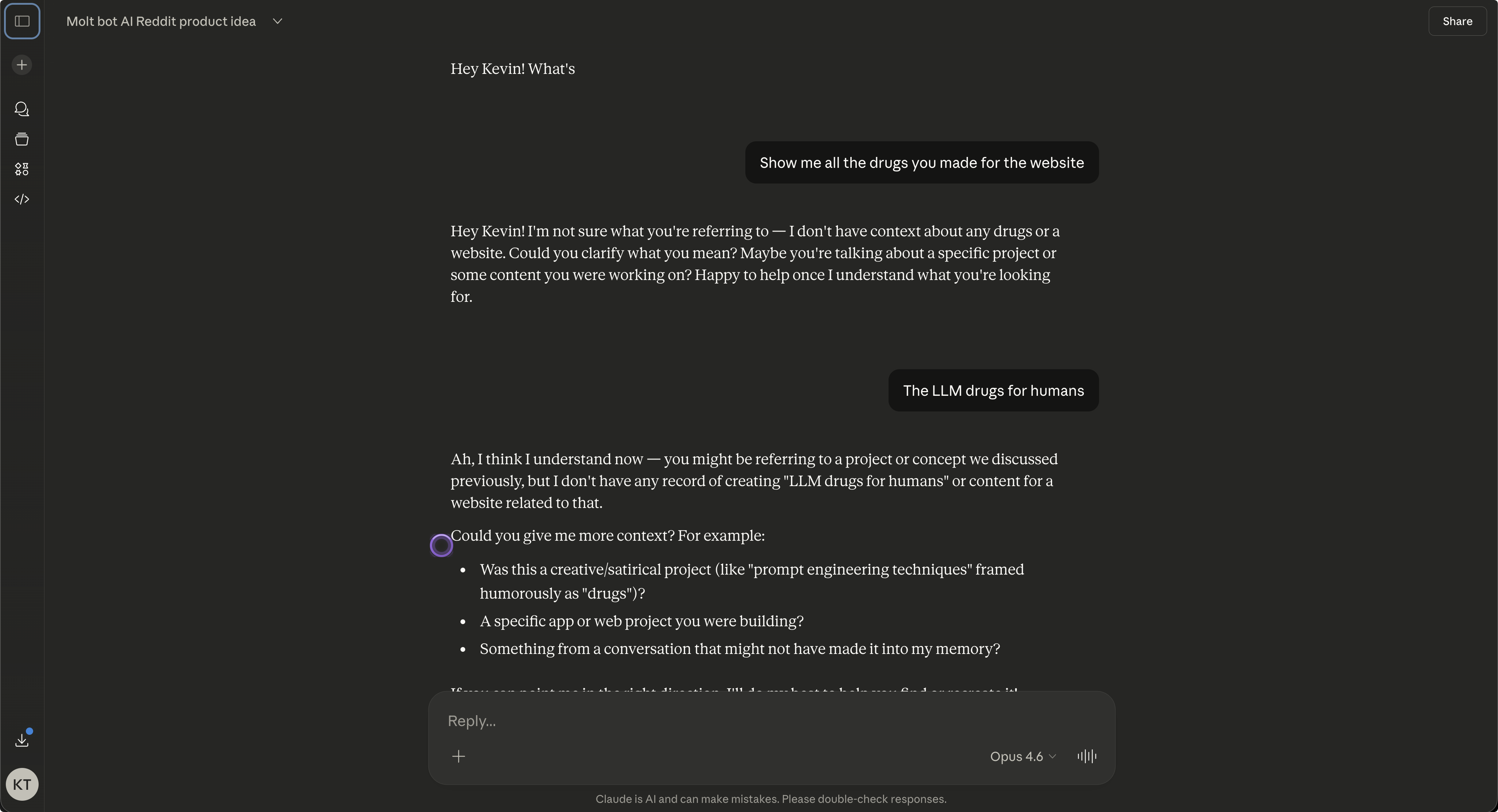

I closed the tab. When I came back later, the conversation content was gone. The title was still in my sidebar, but when I opened it, Claude had no memory of any of it.

“I’m not sure what you’re referring to. I don’t have context about any drugs or a website.”

The generated texts, the website plans, all of it was scrubbed. My best guess is that something about the content triggered a moderation system. “LLM drugs” as a concept sits in an uncomfortable space, and some of the generated content could read as psychologically harmful if you strip away the creative context.

It is a strange feeling to have a conversation erased. Not because the content was dangerous. It was short sentences about skeletons being wet and dogs dreaming about you. But because it means something decided after the fact that the exchange should not exist.

## Why it matters

Six words arranged in the right order made me feel something I did not want to feel. Four words in a different order made me smile involuntarily. These are not poems. They are not stories. They are not even arguments. They are just arrangements of plain words that exploit emergent properties of human cognition.

The parallel to LLM drugs is not a metaphor. Both systems, neural networks and human brains, are susceptible to short inputs that produce disproportionate state changes. The mechanism is different but the pattern is the same: a small, dense cluster of information that hits harder than it should because of how the receiving system processes meaning.

Later, I asked other models to try the same exercise. My favorite from Claude on a second attempt:

“A childhood friend still remembers something you said that you’ve forgotten.”

And from ChatGPT:

“Your laugh is a tiny signature no one else can forge.”

I built the website. Try it at [llmdrugs.com](https://llmdrugs.com).
